The Problem Is the AI Strategy — or Rather, the Lack of One

By the time your organisation has finally settled the question of whether it needs an AI strategy, your employees have in all likelihood already built one. It may not be quite the one you had in mind — and unlike the official version, theirs is already running.

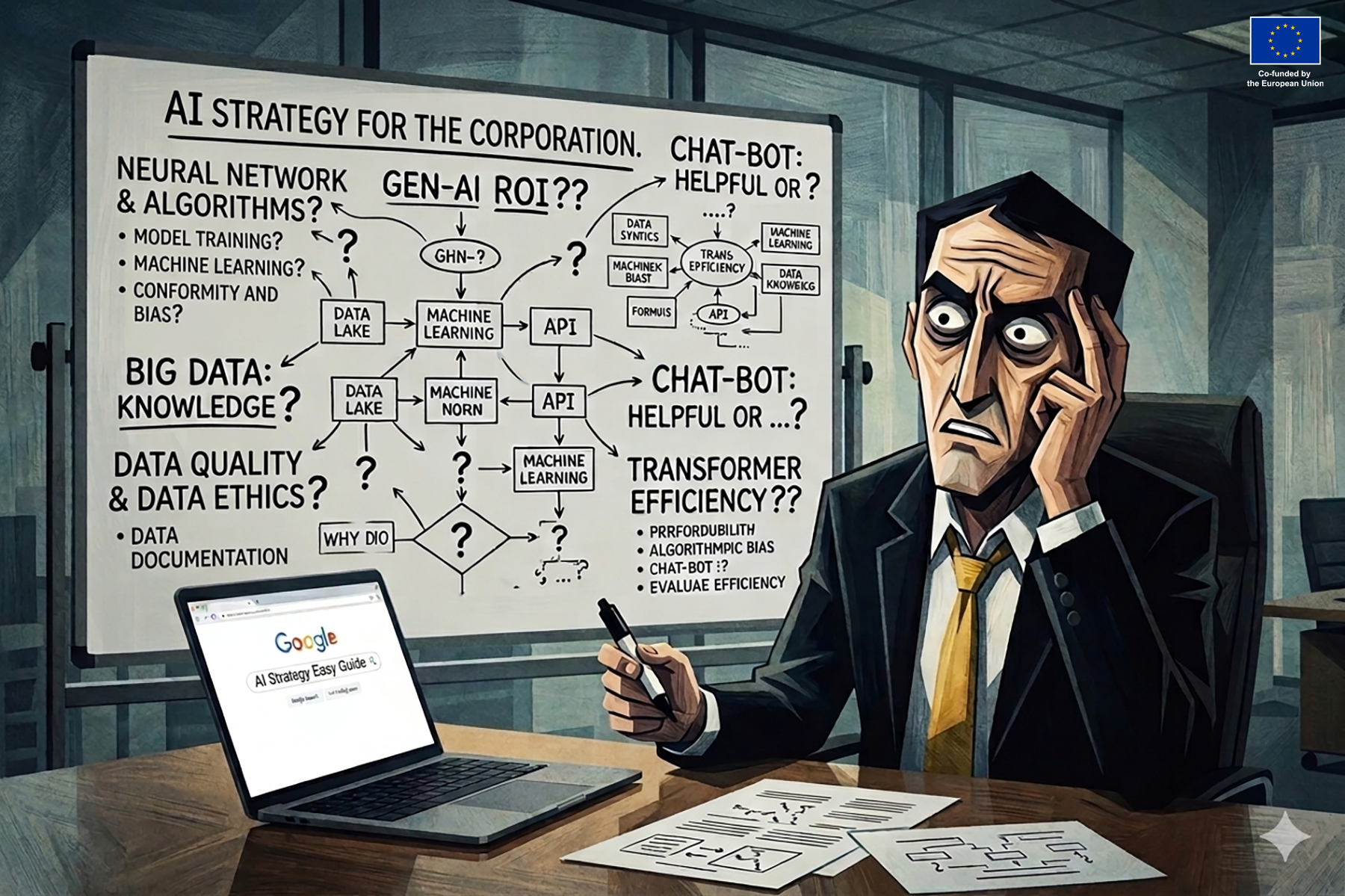

Martti Asikainen 15.4.2026 | Photo: created with AI

Picture a typical Monday morning. An account manager at a mid-sized consultancy pastes three pages of meeting notes into ChatGPT — notes containing client names, budget figures, and a partner’s rather candid assessment of the client’s internal politics.

The summary is ready in 40 seconds. The report goes out at 11:58.

Two floors up, the legal team is summarising contract documents with an AI tool a neighbour happened to recommend at the weekend. HR, meanwhile, has quietly found something rather useful for screening CVs. Nobody told them to. But then, nobody told them not to either. The tools appear to work well enough, and nothing goes visibly wrong.

The client is satisfied. The partner remains none the wiser. The account manager repeats the exercise the following week, and the week after that. So does the rest of the organisation. By the time someone finally gets round to taking stock of which AI tools are actually in use, the workflows are thoroughly established. Sensitive data has left the building dozens of times.

This is a hypothetical scenario, of course. It is also, to a greater or lesser degree, the current situation at rather a lot of companies. A coherent AI strategy is absent, and the longer it stays that way, the more difficult things become to manage.

The Strategy Vacuum Does Not Stay Empty

Many organisations treat the absence of an AI strategy as a neutral position — a sensible pause before the final decision is taken. In practice, it is nothing of the sort. The vacuum fills immediately, and it fills with whatever individual employees find useful, affordable, and accessible.

Research from KPMG found that roughly half of employees use AI tools without their employer’s permission, and 44 per cent knowingly disregard company guidelines in order to improve their own working arrangements. Nearly as many upload sensitive company information to public AI platforms (KPMG 2025). This is not, on the whole, done carelessly; it is done because the tools are genuinely useful and no sanctioned alternative has been offered.

This is less a compliance problem than a structural one. When management neither provides tools nor offers guidance on how AI might be used responsibly, employees do not simply stop. They continue — invisibly, without guardrails, and without any shared sense of where the risks might lie.

The phenomenon is generally referred to as shadow AI, and it tends to be discussed in terms of cybersecurity and data protection (Khan & Asikainen 2026). It is both of those things; but it is also something rather more telling. Employees reaching outside official channels are, by and large, trying to solve genuine problems with the resources available to them. The organisation has simply elected not to be amongst those resources.

The Longer You Wait, the Harder It Gets

Delaying an AI strategy is considerably more than a missed opportunity. Shadow AI does not stand still whilst the strategy is being deliberated upon; it compounds quietly. Every week without a framework is a week in which new workflows become entrenched, tool dependencies accumulate, and habits solidify.

A marketing team that has spent six months working with a particular AI tool has, by now, built its entire content pipeline around it. Asking them to abandon it — or to replace it with something officially sanctioned — is no longer a straightforward policy decision. It has become a change management exercise, and a rather delicate one at that. You are no longer introducing something new; you are asking people to relinquish something that has made their working lives noticeably easier, with no particular guarantee of anything better in return.

The same dynamic plays out across every department. Each develops its own informal AI culture, its own preferred tools, its own workarounds. By the time the official strategy is finally produced, the organisation is not working from a blank canvas. It is attempting to impose order on territory that has already been occupied — unevenly, inconsistently, and without any of the governance frameworks that a properly constructed strategy might have provided from the outset.

This is not, however, a reason for despair. It is a reason to begin now, and to build a strategy that takes the actual state of affairs as its starting point, rather than the state the organisation might prefer to imagine itself in. Time, at this stage, is rather against you.

The Investment Trap

Deloitte’s 2026 State of AI in the Nordics report gives the problem a useful degree of concreteness. It finds that 76 per cent of Nordic organisations plan to increase AI investment substantially this year. Over the same period, strategic preparedness has fallen from 61 per cent to 43 per cent, and talent preparedness has dropped more sharply still, from 33 per cent to just 14 per cent (Deloitte 2026). Spending is accelerating at precisely the moment when readiness is in retreat.

The report’s explanation is fairly straightforward. Organisations that deployed off-the-shelf generative AI tools acquired, in the process, a somewhat misleading sense of their own preparedness. Operational integration then made plain that deploying tools and actually developing the workforce’s capacity to use them are rather different problems. The infrastructure, it turned out, was never the hard part.

The situation is not helped by a conspicuous absence of accountability. Only 20 per cent of Nordic organisations have appointed anyone to take responsibility for measuring whether AI initiatives are actually delivering value, against 32 per cent globally (Deloitte 2026). In four out of five Nordic organisations, in other words, nobody is formally answerable for whether any of it is working. Investment without accountability is not, strictly speaking, a strategy. It is optimism with a budget allocation.

The Strategy That Arrives Too Late

There is a particular failure mode worth identifying clearly, if only so that others might avoid it. It tends to afflict organisations that, having delayed the development of an AI strategy for some time, eventually compensate by producing something suitably comprehensive, formal, and rather slow in arriving. A committee is convened. A framework is drafted. Consultants are engaged. The resulting document is thorough, carefully worded, and more or less entirely disconnected from what employees are actually doing.

This sort of strategy does not, in practice, displace shadow AI. It exists alongside it, largely unread, because it fails to engage with the specific tools people are using, the specific workflows they have developed, or the specific problems that led them to reach for an unsanctioned AI tool in the first place.

The question, then, is not simply whether a strategy exists, but whether it reflects the organisation’s actual situation or merely its preferred self-image. Most organisations that have fallen into this pattern already know the answer, if they are candid with themselves. The document exists. The committee convened. The framework was duly approved. And yet the person in legal is still using the tool their neighbour recommended. Knowing is not, in itself, the difficulty. Acting on what one knows is another matter entirely.

The People Who Already Know the Answers

One of the more consistent failings in AI strategy processes is the exclusion of employee experience from the exercise altogether. Employees who have been using AI tools through informal channels have, in the course of doing so, already carried out a good deal of the investigative work that any serious strategy would need to undertake — if only someone thought to ask them.

They know which tasks are genuinely improved by AI assistance, and where the model produces something that sounds rather plausible but still requires a human being to catch the errors. They know which tools actually suit their working patterns, and which ones proved rather less impressive in practice than in the demonstration. They know where the time savings are real, and where they quietly evaporate.

This knowledge is almost never gathered systematically. It resides in individual workflows, shared folders, and Slack threads amongst colleagues who have worked something out and are passing it along informally. The formal strategy process, meanwhile, tends to involve senior leadership, a technology function, and perhaps one or two external consultants — individuals who have, more often than not, done considerably less hands-on AI work than the staff they are setting out to govern.

Excluding employee experience from strategy development is not merely a missed opportunity; it constitutes a governance error in its own right. A strategy built without such input rests on assumptions about how work is done, rather than how it actually is done. The particular irony is that the same people whose informal tool use represents the problem are also the most direct route to its resolution.

The Turn Most Strategies Miss

The conventional response to this sort of challenge is to reach for a framework: audit existing usage, categorise the risks, revise the policy, run some training. All of that is necessary. None of it, on its own, is quite sufficient. AI governance constructed primarily around risk mitigation tends to produce environments in which employees are perfectly clear about what they are not permitted to do, and rather vaguer about what they are.

A list of prohibited platforms and a clause about sensitive data constitute a constraint system, not a capability system. They address the more visible risks whilst leaving the underlying dynamic largely intact. The Deloitte findings illustrate this rather neatly. The share of Nordic organisations in which at least 40 per cent of the workforce has access to approved AI tools grew from 37 per cent to 56 per cent in a single year (Deloitte 2026).

A considerable increase, and yet in the same period both strategic and talent preparedness declined sharply. Broader access, in the absence of capability, ownership, and strategic clarity, is not enablement. It is proliferation, and shadow AI proliferates along with it. The organisations that tend to manage this rather better are those that provide sanctioned alternatives genuinely superior to the unsanctioned ones: tools that meet employees where they actually are, and that handle the data governance questions without placing the full burden of judgement on the individual.

The Cost of Waiting, and What Follows

The decision to defer an AI strategy is generally presented as prudence — a responsible choice to wait until the technology is better understood, the regulatory picture is clearer, or some internal consensus has been reached. What this framing tends to obscure is that deferral carries its own costs, and they are not hypothetical future ones. They are being incurred at present: in data that has already left the organisation, in decisions taken on the basis of AI outputs that no one has verified, and in a growing divergence between the organisation’s official position on AI and what is actually happening.

Shadow AI incidents cost organisations considerably more to resolve than standard security incidents, largely because of the time required to establish what data was involved and who had access to it (Zorz 2026). The EU AI Act places explicit obligations on organisations to ensure appropriate AI literacy and governance arrangements (European Union 2024). Its AI literacy requirement has applied since February 2025, and most of its remaining provisions apply from August 2026. Meeting those obligations is, frankly, not straightforward for an organisation that has yet to develop a functioning strategy.

The Nordic picture makes the wider stakes rather apparent. According to Deloitte, Nordic organisations compare favourably with global peers on technical infrastructure, yet their strategic and talent readiness are both declining (Deloitte 2026). This suggests, fairly strongly, that technical capability does not of itself produce strategic coherence. It simply makes the gap between what an organisation can do and what it has decided to do rather more expensive to sustain.

The next time someone in a meeting remarks that the organisation’s AI position is still being worked through, it may be worth asking a rather simpler question: what is everyone actually doing in the meantime? You may well already know the answer. The more pertinent question is whether the others around the table — the ones shaping the strategy — know it too.

References

Deloitte. (2026). State of AI in the Nordics: Deloitte’s State of AI in the Enterprise report series — Nordic cut. Deloitte AI Institute.

European Union. (2024). Regulation (EU) 2024/1689 of the European Parliament and of the Council laying down harmonised rules on artificial intelligence (Artificial Intelligence Act). Official Journal of the European Union.

Khan, A. U. & Asikainen, M. (2026). How (Not) to Destroy Your Business with AI. Finnish AI Region. https://www.fairedih.fi/en/2026/02/05/how-not-to-destroy-your-business-with-ai/

KPMG. (2025). Trust, attitudes and use of artificial intelligence: A global study 2025. KPMG International.

McKinsey & Company. (2025). The state of AI in 2025: Agents, innovation, and transformation. McKinsey Global Institute.

NIST. (2023). Artificial intelligence risk management framework (AI RMF 1.0). National Institute of Standards and Technology.

Zorz, M. (2026). AI went from assistant to autonomous actor and security never caught up. Help Net Security. https://www.helpnetsecurity.com/2026/03/03/enterprise-ai-agent-security-2026/

Authors

Martti Asikainen

Communications Lead

Finnish AI Region

+358 44 920 7374

martti.asikainen@haaga-helia.fi
